NVIDIA Lyra 2.0: Open Source 3D World Generation from a Single Image

NVIDIA's Spatial Intelligence Lab has released Lyra 2.0, an open-source framework that converts a single photograph into a navigable, geometrically consistent 3D world. The system is licensed under Apache 2.0, which permits commercial use, and ships with model weights on Hugging Face and code on GitHub.

Lyra 2.0, NVIDIA Spatial Intelligence Lab

From Image to Interactive World

Lyra 2.0 takes one input image, generates a camera-controlled video walkthrough of the scene, and reconstructs that walkthrough into 3D Gaussian Splats and surface meshes. Those outputs load directly into real-time rendering engines and physics simulators.

The system is built on Wan 2.1-14B, a Diffusion Transformer trained for video generation. It produces frames at 832×480 resolution using 35 denoising steps. A distilled variant reduces that to 4 steps for faster iteration without retraining.
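As a rough illustration of what the distilled variant saves, the arithmetic below compares the two step counts. It assumes sampling cost grows linearly with the number of denoising steps and ignores fixed overhead such as encoding and decoding, so the ratio is closer to an upper bound on the speedup than to a measured figure.

```python
# Step counts come from the article; the linear cost model is an assumption.
FULL_STEPS = 35
DISTILLED_STEPS = 4


def relative_cost(steps: int, baseline_steps: int = FULL_STEPS) -> float:
    """Fraction of the baseline denoising work, assuming cost is linear in steps."""
    return steps / baseline_steps


def step_ratio(baseline_steps: int = FULL_STEPS, steps: int = DISTILLED_STEPS) -> float:
    """How many times fewer denoising steps the distilled variant runs."""
    return baseline_steps / steps


print(f"Distilled variant: {relative_cost(DISTILLED_STEPS):.1%} of the full denoising work")
print(f"Step ratio: {step_ratio():.2f}x")
```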

Lyra 1.0, released in September 2025, introduced the core pipeline for 3D and 4D scene generation from single images. Lyra 2.0 extends that foundation to long-horizon exploration, letting users navigate through large spatial regions while the model maintains geometric consistency across the entire sequence. Other open-source world simulators approaching this problem include Lingbot World, which builds on Wan2.2 for camera-controlled environment generation under the same Apache 2.0 terms.

Keeping the World Consistent

Two failure modes undermine long-horizon 3D generation. Spatial forgetting occurs when the model revisits a distant region and hallucinates new geometry rather than reproducing what it already generated. Temporal drifting happens when small per-frame errors accumulate until the scene diverges from the original input.

Lyra 2.0 addresses spatial forgetting through geometry-based frame retrieval. Instead of feeding historical frames directly as image conditioning, the system routes information exclusively through 3D geometry. It retrieves the historical frames with maximum visibility to the target viewpoint and establishes dense correspondences via canonical coordinate warping.
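The retrieval idea can be illustrated with a toy sketch. This is not Lyra 2.0's implementation: the `Camera` class, the pinhole model without rotation, the focal length, and the scoring rule (count of scene points visible from both the target and a historical view) are all assumptions made for illustration, and occlusion is ignored.

```python
from dataclasses import dataclass
from typing import List, Sequence, Set, Tuple

Point = Tuple[float, float, float]


@dataclass
class Camera:
    """Hypothetical pinhole camera at `position`, looking along +z (no rotation)."""
    position: Point
    focal: float = 500.0  # assumed focal length in pixels
    width: int = 832      # frame size quoted in the article
    height: int = 480


def visible_ids(cam: Camera, points: Sequence[Point]) -> Set[int]:
    """Indices of points in front of the camera that project inside the image."""
    cx, cy = cam.width / 2, cam.height / 2
    seen = set()
    for i, (x, y, z) in enumerate(points):
        dx, dy, dz = x - cam.position[0], y - cam.position[1], z - cam.position[2]
        if dz <= 0:
            continue  # behind the camera
        u = cam.focal * dx / dz + cx
        v = cam.focal * dy / dz + cy
        if 0 <= u < cam.width and 0 <= v < cam.height:
            seen.add(i)
    return seen


def retrieve_frames(target: Camera, history: Sequence[Camera],
                    points: Sequence[Point], k: int = 2) -> List[int]:
    """Return up to k history indices that share the most visible points with the target."""
    target_seen = visible_ids(target, points)
    scored = [(len(target_seen & visible_ids(cam, points)), idx)
              for idx, cam in enumerate(history)]
    scored.sort(key=lambda s: (-s[0], s[1]))  # highest overlap first, ties by age
    return [idx for score, idx in scored[:k] if score > 0]


# Toy scene: a row of points 10 units in front of the cameras.
scene = [(float(x), 0.0, 10.0) for x in range(-20, 21)]
past_views = [Camera((-30.0, 0.0, 0.0)), Camera((10.0, 0.0, 0.0)), Camera((1.0, 0.0, 0.0))]
print(retrieve_frames(Camera((0.0, 0.0, 0.0)), past_views, scene, k=2))
```

Scoring by shared visible geometry rather than by recency is what lets a distant revisit pull in the frames that originally showed that region, however long ago they were generated.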

Temporal drifting is reduced through self-augmentation training, where the model trains on its own degraded outputs. This closes the gap between training conditions and inference behavior. FramePack temporal compression runs alongside this strategy to limit how far reconstruction errors propagate across extended sequences.
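A minimal sketch of the self-augmentation idea follows, under stated assumptions. In the real system the degraded frames would come from the model's own predictions; here a simple box blur stands in for that, and the names `build_history`, `box_blur`, and `p_augment` are hypothetical.

```python
import random
from typing import Callable, List, Sequence

Frame = List[float]  # stand-in for an image tensor


def box_blur(frame: Frame) -> Frame:
    """Hypothetical degradation: 3-tap box blur standing in for model output artifacts."""
    out = []
    for i in range(len(frame)):
        window = frame[max(0, i - 1): i + 2]
        out.append(sum(window) / len(window))
    return out


def build_history(clean: Sequence[Frame], degrade: Callable[[Frame], Frame],
                  p_augment: float, rng: random.Random) -> List[Frame]:
    """Swap each clean history frame for a degraded copy with probability p_augment."""
    return [degrade(f) if rng.random() < p_augment else list(f) for f in clean]


rng = random.Random(0)
clean_history = [[0.0, 3.0, 0.0], [1.0, 1.0, 4.0]]
# During training, the conditioning context would mix clean and degraded frames.
print(build_history(clean_history, box_blur, p_augment=0.5, rng=rng))
```

Training on a mix of clean and degraded context means the model sees inputs at training time that resemble the imperfect frames it will condition on during long rollouts.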

Diverse Scene Generation

Lyra 2.0 handles a wide range of environment types from single input images, including fantasy interiors, futuristic urban landscapes, outdoor terrain, and architectural spaces.

Example scenes: surreal Eastern fantasy, futuristic street terrace.

On the DL3DV and Tanks and Temples benchmarks, Lyra 2.0 scores an LPIPS of 0.552, an FID of 51.33, and a style consistency of 85.07%. These figures measure perceptual similarity, distribution fidelity, and visual coherence across generated frames, respectively. Lower is better for LPIPS and FID; higher is better for style consistency.

Creating Simulation-Ready 3D Environments with the Interactive GUI

Lyra 2.0 ships an interactive GUI for scene exploration and camera trajectory planning. Users draw paths through the generated environment, and the model progressively extends the scene as the virtual camera moves forward.

Lyra 2.0 in NVIDIA Isaac Sim, delivery robot navigation

The GUI exports finished scenes directly to NVIDIA Isaac Sim for embodied AI and robotics training. A delivery robot navigating a new facility can be trained in a simulated version of that facility, built in minutes from a single photograph. The 3D Gaussian Splat export preserves full spatial geometry and texture detail for physics engine use.

Robot environment

Chinese temple

Staircase environment

Coastal scene

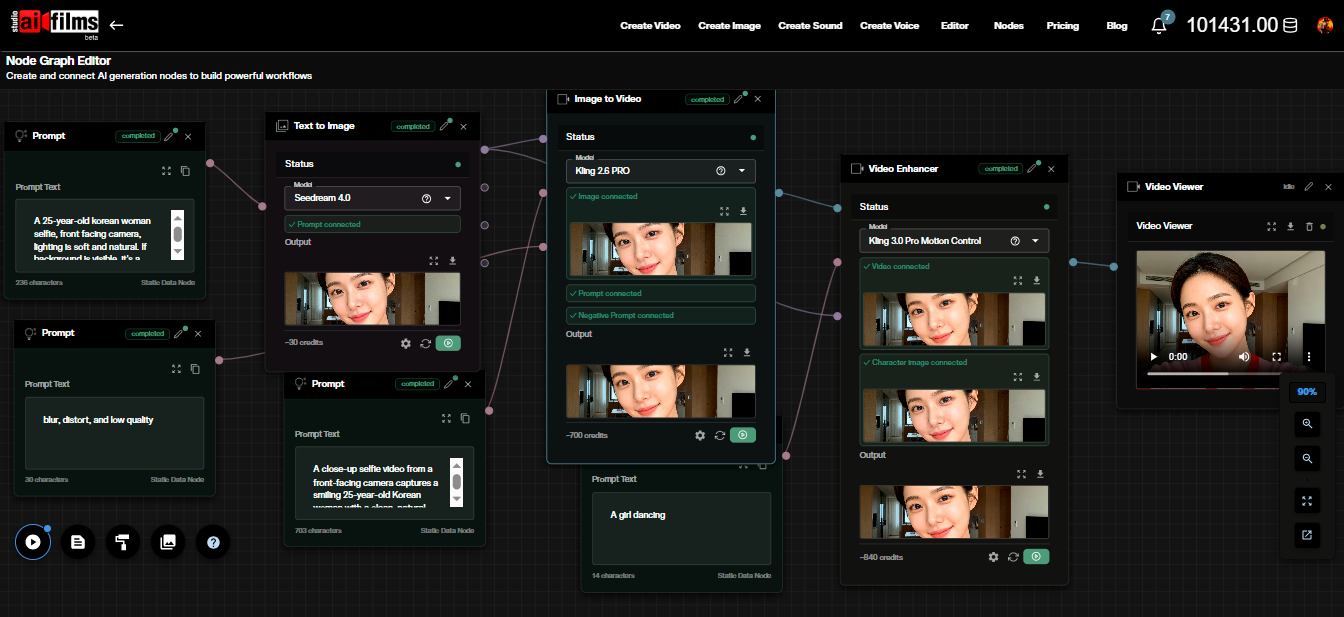

For filmmakers, the GUI offers a faster path to spatial previsualization than manual 3D modeling. Generate a scene from a location photograph, walk through it to plan shots, then export to a game engine. Explore more ways to generate and manipulate scenes in the AI FILMS Studio video workspace.

Open Source and Available for Commercial Use

Lyra 2.0 is released under the Apache 2.0 license, which permits commercial use, modification, and redistribution. Model weights are available on Hugging Face at nvidia/Lyra-2.0. Source code is on GitHub at nv-tlabs/lyra, with 927 stars and 55 forks at publication.
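Fetching the weights could look like the sketch below. It uses `snapshot_download` from the `huggingface_hub` package with the repository name quoted above; the local directory name is an arbitrary choice, and whether the repository requires accepting terms or an access token is not stated in the article, so treat this as a starting point rather than verified setup instructions.

```python
REPO_ID = "nvidia/Lyra-2.0"  # repository name as given in the article


def download_weights(local_dir: str = "./lyra2-weights") -> str:
    """Download the model repository and return the local folder path."""
    # Imported lazily so this module loads even if huggingface_hub is not installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=REPO_ID, local_dir=local_dir)
```

Calling `download_weights()` needs network access and enough disk space for a 14-billion-parameter checkpoint.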

No managed inference endpoint exists at launch, so teams run the model locally on their own hardware. The Wan 2.1-14B base requires a GPU with enough VRAM for 14-billion-parameter inference. NVIDIA LongLive, the lab's earlier release for real-time interactive long video generation, shipped under non-commercial terms. Lyra 2.0's Apache 2.0 license removes that restriction for commercial production pipelines.
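For a sense of scale, the back-of-the-envelope calculation below estimates the memory taken by the weights of a 14-billion-parameter model at common precisions. It covers the weights only; activations, the video autoencoder, text encoders, and framework overhead add to it. The article does not state which precision Lyra 2.0 runs in, so the table is illustrative.

```python
NUM_PARAMS = 14e9  # parameter count of the Wan 2.1-14B base, per the article

# Bytes per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "bf16/fp16": 2, "int8": 1}


def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory for the weights alone, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


for name, nbytes in BYTES_PER_PARAM.items():
    print(f"{name:>9}: {weight_memory_gib(NUM_PARAMS, nbytes):6.1f} GiB")
```

At 16-bit precision the weights alone come to roughly 26 GiB, which already exceeds the memory of many consumer GPUs before any activations are counted.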

Image-to-3D approaches are converging across labs. Tencent's HunyuanWorld Mirror covers similar territory using Gaussian splatting for scene representation. Lyra 2.0 distinguishes itself with the anti-forgetting and anti-drifting mechanisms that sustain consistency across longer trajectories, and with the Isaac Sim pipeline for simulation use.

Sources

arXiv: Lyra 2.0: Explorable Generative 3D Worlds

GitHub: nv-tlabs/lyra

Hugging Face: nvidia/Lyra-2.0

Project Page: research.nvidia.com/labs/sil/projects/lyra2

Continue Reading

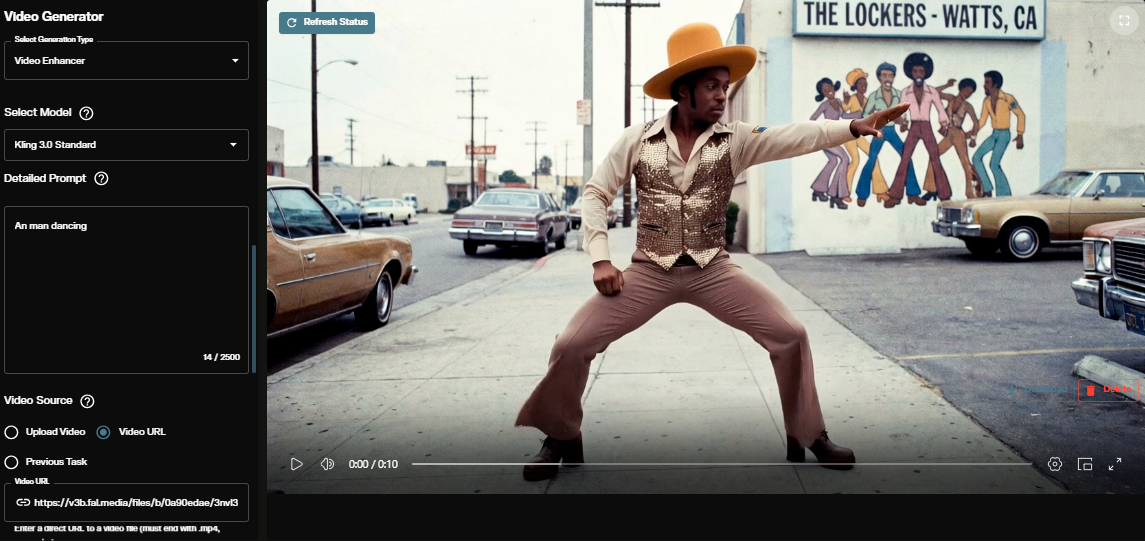

Video & LipSync

- Video Generator

- Text to Video

- Image to Video

- Start-End Frame to Video

- Draw to Video

- Motion Control

- Video Enhancer

- Video Upscaler

- Video to Video LipSync

- Audio to Video LipSync

- Image to Video LipSync

- Video FaceSwap

- Seedance 2

- OpenAI Sora 2

- Kling 3.0

- Kling O1

- Google Veo 3.1

- LTX 2.3

- Kling O1

- Hailuo AI

- Luma Ray

- Kling 3.0 Motion

- Topaz Upscaler

- InfiniteTalk Face Swap